NARRATIVE/SYSTEMATIC REVIEWS/META-ANALYSIS

Assessment Criteria for Digital Health Interventions Focusing on Healthcare Providers: A Scoping Review

Issam El Kouarty, MD1, Samia El Hilali, MD, MPH2, Abdelmajid Sahnoun, MD3, and Majdouline Obtel, PhD2

1Faculty of Medicine and Pharmacy of Rabat, Mohammed V University, Rabat, Morocco; 2Laboratory of Biostatistics, Clinical and Epidemiological Research, Laboratory of Community Health, Preventive Medicine and Hygiene, Department of Public Health, Faculty of Medicine and Pharmacy of Rabat, Mohammed V University, Rabat, Morocco; 3Ministry of Health and Social Protection, Rabat, Morocco

Keywords: Assessment criteria, digital health intervention, eHealth, healthcare providers, telemedicine

Abstract

Background: Digital health interventions (DHIs) are increasingly integrated into healthcare systems and widely adopted by healthcare providers (HPs). The lack of consistent evaluation standards makes it difficult to identify high-quality DHIs. Here, the authors present an overview of the criteria used to assess DHIs, focusing on HPs.

Methods: A scoping review was conducted following the methodological framework proposed by Arksey and O’Malley and adhering to the PRISMA-ScR (Preferred Reporting Items for Systematic Reviews and Meta-Analyses extension for Scoping Reviews) guidelines. Searches were conducted in PubMed, Web of Science, Scopus, and Cochrane Library for English-language studies published between February 2019 and February 2024 that evaluated DHIs focusing on HPs.

Results: Of 1,774 articles reviewed, 15 met the eligibility criteria. Analysis revealed multiple approaches to assess DHIs, with no single consensus. Drawing on insights from 15 selected studies, we derived a unified, holistic framework to assess DHIs focusing on HPs, encompassing 29 criteria grouped into 10 key areas: data governance, usability, information management, health impact, patient-centered care, technical aspects, global context, functionality, sustainability, and design.

Conclusion: The framework offers a structured and comprehensive basis for assessing DHIs with a focus on HPs, supporting the optimization and sustainability of such interventions. Future studies could further explore and refine the framework across diverse contexts to strengthen its applicability.

Plain Language Summary

Many frameworks exist to assess digital health interventions (DHIs), but few are specifically designed for healthcare providers (HPs). The authors conducted a scoping review to identify and summarize the criteria used to assess DHIs focusing on HPs. Drawing on 15 studies, a comprehensive assessment framework was derived, grouping 29 criteria into 10 key areas, including usability, technical aspects, data governance, health impact, and patient-centered care. This framework offers a structured and practical approach to help HPs adopt effective DHIs and supports policymakers in promoting evidence-based, sustainable digital solutions that improve healthcare delivery and patient outcomes.

Citation: Telehealth and Medicine Today © 2025, 10: 629

DOI: https://doi.org/10.30953/thmt.v10.629

Copyright: © 2025 The Authors. This is an open-access article distributed in accordance with the Creative Commons Attribution Non-Commercial (CC BY-NC 4.0) license, which permits others to distribute, adapt, enhance this work non-commercially, and license their derivative works on different terms, provided the original work is properly cited and the use is non-commercial. See http://creativecommons.org/licenses/by-nc/4.0. The authors of this article own the copyright.

Submitted: September 11, 2025; Accepted: October 16, 2025; Published: December 6, 2025

Corresponding Author: Issam El Kouarty, Email: elkouarty.issam@gmail.com

Competing interests and funding: The authors declare that no competing interests exist.

This study was not supported by any sponsor or funder.

The World Health Organization (WHO) defines eHealth as the safe and cost-effective integration of information and communication tools into health practices for the purposes of surveillance, education, capacity building, and research.1 Building on this, the WHO defines digital health interventions (DHIs) as discrete functionalities of digital technologies that are applied to achieve specific health objectives.2 These include tools such as mobile health applications, telemedicine services, decision-support systems, and digital platforms, which are designed to improve communication, healthcare delivery, health system management, and patient outcomes.2

Depending on the primary target user, the WHO classifies DHIs into four intervention groups: clients, healthcare providers (HPs), health system or resource managers, and data services.2 DHIs should address gaps in the utilization and accessibility of health services, promote equity, and uphold the right to health, particularly in remote areas and among the elderly and disabled.2,3 Beyond client-facing solutions, DHIs focusing on HPs, such as telemedicine and electronic health records, are essential for strengthening existing health services, supporting HPs in clinical decision-making, improving care coordination, and ensuring continuity of care. These interventions must also follow robust security and privacy standards, rather than operating as stand-alone solutions.1

Given the rapid development of DHIs, HPs play a central role in selecting and implementing appropriate DHIs, which underscores the need for reliable evaluation criteria.4,5 Currently, the vast majority of DHIs are not rigorously evaluated and therefore cannot demonstrate their impact on health outcomes.6,7 For instance, a WHO European Region report found that more than half of member countries had not assessed their eHealth services.8 Evidence on evaluation criteria for provider-level DHIs remains limited,9 and the lack of consensus or international standards could negatively affect their adoption. Failure to consider key criteria such as validity, usability, and clinical accuracy may also compromise user safety and the effectiveness of care.10 This challenge is compounded by a rapidly expanding market of over 300,000 DHIs, with more than 200 new ones added daily, creating a crowded and complex landscape.10

Several studies have addressed the assessment of DHIs, each contributing in different ways. In 2024, Janette Ribaut et al.11 developed criteria for evaluating eHealth tools across all primary target users. The 2023 study by Christine Jacob et al.10 assessed the quality and impact of eHealth tools, taking into account sociotechnical factors. In 2022, Franklin I. Onukwugha et al.12 evaluated the effectiveness of mobile health interventions for all primary users. Meanwhile, the 2021 work by Kayode Philippe Fadahunsi et al.13 synthesized an information quality framework to assess DHIs within engineering and computer science, offering insights into technical evaluation approaches.

To date, no study has, to the best of our knowledge, comprehensively evaluated all types of DHIs specifically targeting HPs. This work provides an up-to-date review that synthesizes the evidence and describes criteria to assess DHIs for HPs, thereby supporting their reliable integration into clinical practice.

Methodology

Overview

A scoping review was conducted to map the current evidence in this field.14 The review adhered to the methodological framework proposed by Arksey and O’Malley15 and followed the PRISMA-ScR guidelines (Preferred Reporting Items for Systematic Reviews and Meta-Analyses extension for Scoping Reviews).16

Search Strategy

A comprehensive search was conducted in PubMed, Scopus, Web of Science, and Cochrane Library for studies published in English between February 2019 and February 2024. The research question was, “What is known from the existing literature about the criteria used to assess DHIs focusing on HPs?”

A Boolean search string was constructed using the Population/Problem, Intervention/Exposure, Comparator/Control, and Outcome (PICO) framework,17 with the following components: Participants (P): HPs; intervention (I): DHIs; comparators (C): not applicable; and outcome (O): assessment criteria. This search strategy was applied consistently across all databases. Details of the search strategy are provided in Table 1.
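As a rough illustration of how a PICO-structured Boolean string can be assembled, the following Python sketch joins the terms within each component with OR and joins the components with AND. The terms are borrowed from this article's keywords purely as placeholders; the actual strategy is the one reported in Table 1, and the function name `build_query` is hypothetical.

```python
def build_query(components):
    """Join terms with OR inside each PICO component, then AND the components."""
    blocks = []
    for terms in components.values():
        if not terms:  # a "not applicable" component contributes nothing
            continue
        quoted = [f'"{term}"' for term in terms]
        blocks.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(blocks)


# Placeholder terms taken from the article keywords (assumption, not Table 1)
pico = {
    "population": ["healthcare providers"],
    "intervention": ["digital health intervention", "eHealth", "telemedicine"],
    "comparator": [],  # not applicable in this review
    "outcome": ["assessment criteria"],
}

query = build_query(pico)
print(query)
```

Keeping each component as a separate list makes it easy to reuse the same structure across databases, although field tags and truncation syntax differ between them and would need to be adapted.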

Eligibility Criteria

As shown in Table 2, the inclusion and exclusion criteria were structured following the PICO framework. We included original, peer-reviewed articles published in English between February 2019 and February 2024 that evaluated DHIs focusing on HPs as the primary users. Studies were excluded if they did not report an evaluation of DHIs; focused solely on the conceptual or engineering design of DHIs; were published as interviews, commentaries, unstructured observations, position papers, or editorials; or were part of the grey literature. Studies for which the full text was unavailable or not freely accessible were also excluded.

Selection Process

All studies retrieved from the databases were imported into Zotero, an open-source reference management tool, to organize citations and document screening outcomes.17 Three reviewers independently conducted title and abstract screening. Discrepancies were resolved through discussion, and if consensus could not be reached, a fourth reviewer was consulted. Following this, two reviewers independently assessed the full-text articles for eligibility. Studies not meeting the criteria were excluded, and any remaining conflicts were resolved through consultation with the other reviewers. Screening lasted from March to June 2024.
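The discrepancy step described above can be sketched in a few lines of Python. This is only an illustration under assumed inputs: the record identifiers and votes are hypothetical, and the review itself tracked screening outcomes in Zotero rather than in code.

```python
def find_discrepancies(decisions):
    """Return record IDs on which the reviewers' include/exclude votes differ."""
    return [record for record, votes in decisions.items() if len(set(votes)) > 1]


# Hypothetical votes from three reviewers (True = include at title/abstract stage)
decisions = {
    "record-001": (True, True, True),
    "record-002": (True, False, True),
    "record-003": (False, False, False),
}

to_discuss = find_discrepancies(decisions)
print(to_discuss)
```

Only the records returned by `find_discrepancies` would go to discussion and, if consensus is not reached, to the additional reviewer.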

Data Extraction and Analysis

Two reviewers performed the initial extraction of data from the included studies, which was then independently checked by two additional reviewers. To reduce the risk of errors, a standardized data extraction form, developed collectively by all reviewers, was used. The form was piloted on five studies to confirm that it captured all relevant information. The final extracted data are presented in Table 3.

Given the heterogeneity of study designs and reported outcomes, a quantitative synthesis was not feasible. A narrative synthesis combined with thematic analysis was therefore conducted. Braun and Clarke’s approach to thematic analysis18 was applied to identify themes addressing the review question.

Evaluation criteria were categorized into areas such as technical performance, usability, sustainability, and data governance. New categories were added iteratively when emerging concepts did not fit existing areas. Extracted areas were then mapped against existing conceptual frameworks, overlapping categories were merged, and the final framework was validated through consensus discussions among the review team. The synthesis process was carried out from July to October 2024.

Study Assessment

Studies were assessed using the Critical Appraisal Skills Programme (CASP) Checklist19 and the Joanna Briggs Institute (JBI) Critical Appraisal Checklist.20

Results

Table 4 lists the studies that met the eligibility requirements and comprised the data set reported here.

| Reference | Location | Study design | Type of DHI | Specialty or condition |
| --- | --- | --- | --- | --- |
| Samia El Joueidi et al.21 | Canada | Mixed methods | Mobile Health App | Chronic diseases; maternal, neonatal, and child health; HIV prevention; tuberculosis |
| Silvina Arrossi et al.22 | Argentina | Mixed methods | Mobile Health App | Oncology |
| Sarah Lagan et al.23 | United States | Mixed methods | Mobile Health App | General medical practices |
| Chelsea Jones et al.24 | Canada | Qualitative | Mobile Health App | Mild traumatic brain injuries |
| Anna E. Roberts et al.25 | Australia | Qualitative | Mobile Health App | Mental health |
| Ramy Sedhom et al.26 | United States | Qualitative | Mobile Health App | Oncology |
| Yong Yu Tan et al.27 | Ireland | Mixed methods | Health Websites and Mobile App | Diabetes |
| Fionn Woulfe et al.28 | United Kingdom | Mixed methods | Health Websites and Mobile App | COVID-19 |
| Lauren M. Little et al.29 | United States | Mixed methods | Health Websites and Mobile App | Ergotherapy (occupational therapy) |
| Noy Alon et al.30 | United States | Mixed methods | Mobile Health App | Mental health; hypertension |
| Kagiso Ndlovu et al.31 | South Africa | Mixed methods | Health Websites and Mobile App | General medical practices |
| Kamran Khowaja et al.32 | Qatar | Mixed methods | Mobile Health App | Diabetes |
| Kerstin N. Vokinger et al.33 | Switzerland | Qualitative | Health Websites and Mobile App | Mental health; COVID-19 |
| Madeleine Haig et al.34 | United Kingdom | Mixed methods | Mobile Health App | Chronic diseases |
| Afua Van Haasteren et al.35 | United Kingdom | Quantitative | Mobile Health App | General medical practices |

COVID-19: coronavirus disease 2019; DHI: digital health intervention.

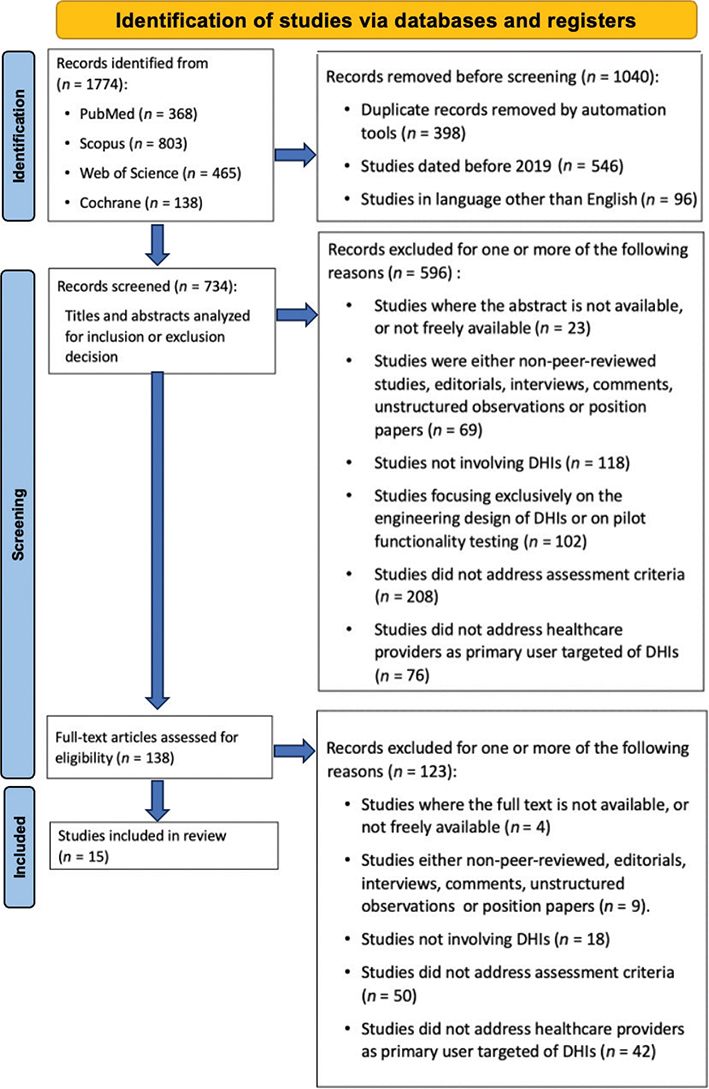

The initial search retrieved 1,774 studies: 803 from Scopus, 465 from Web of Science, 368 from PubMed, and 138 from Cochrane. After excluding 1,040 studies, 734 remained for screening. Following title, abstract, and full-text screening, 15 articles were included in the review. The selection process is summarized in the PRISMA flowchart in Figure 1.

Fig. 1. Article selection flowchart based on PRISMA guidelines. DHIs: digital health interventions; HPs: healthcare providers; PRISMA: Preferred Reporting Items for Systematic Reviews and Meta-Analyses.
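The counts reported above can be cross-checked with simple arithmetic. The short Python sketch below uses only the figures given in the text; the number excluded during screening is derived here and is not stated in the text.

```python
# Records retrieved per database, as reported in the text
retrieved = {"Scopus": 803, "Web of Science": 465, "PubMed": 368, "Cochrane": 138}

total = sum(retrieved.values())
excluded_before_screening = 1040
screened = total - excluded_before_screening
included = 15

# Derived value (not reported in the text): records dropped during
# title, abstract, and full-text screening
excluded_during_screening = screened - included

print(total, screened, excluded_during_screening)
```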

Characteristics of Selected Studies

Of the 15 included studies, most were published in 2020 (6/15), followed by 2021 (4/15), 2022 and 2023 (2/15 each), and 2019 (1/15), thus covering a 5-year period. Geographically, the studies reflected broad international coverage, with 4/15 conducted in the United States, 3/15 in the United Kingdom, 2/15 in Canada, and 6/15 across other countries. Methodologically, the majority adopted mixed methods approaches (10/15), while 4/15 employed qualitative designs and only 1/15 a quantitative design. The medical domains addressed were diverse and not mutually exclusive, as some studies covered several conditions: general practice and diabetes (3/15 each); mental health, chronic diseases, oncology, and COVID-19 (2/15 each); and six other conditions (1/15 each).

Regarding technology, the included studies investigated diverse forms of DHI use, with the majority (10/15) focused on mobile applications, whereas 5/15 examined both mobile and web-based platforms. Furthermore, 12/15 studies relied on existing frameworks, while 3/15 proposed new ones. The characteristics of the included studies are presented in detail in Table 5.

| Study characteristic | References |
| --- | --- |
| Publication date | |
| 2023 (n = 2) | 31,34 |
| 2022 (n = 2) | 27,28 |
| 2021 (n = 4) | 25,26,29,31 |
| 2020 (n = 6) | 23,24,30,32,33,35 |
| 2019 (n = 1) | 22 |
| Location | |
| United States (n = 4) | 23,26,29,30 |
| United Kingdom (n = 3) | 28,34,35 |
| Canada (n = 2) | 21,24 |
| Others (n = 6) | Argentina,22 Australia,25 Ireland,27 South Africa,31 Qatar,32 Switzerland33 |
| Study design | |
| Mixed methods (n = 10) | 21–23,27–32,34 |
| Qualitative (n = 4) | 24–26,33 |
| Quantitative (n = 1) | 35 |
| Specialty or condition | |
| General medical practices (n = 3) | 23,31,35 |
| Diabetes (n = 3) | 27,30,32 |
| Mental health (n = 2) | 25,33 |
| Chronic diseases (n = 2) | 21,34 |
| Oncology (n = 2) | 22,26 |
| COVID-19 (n = 2) | 28,33 |
| Others (n = 6) | Maternal-Neonatal-Child Health Programs,21 HIV prevention,21 Tuberculosis,21 Mild Traumatic Brain Injuries,24 Ergotherapy,29 Hypertension30 |
| Types of DHI | |
| Mobile Health App (n = 10) | 21–26,30,32,34,35 |
| Health Websites and Mobile App (n = 5) | 27,28,29,31,33 |
| Theoretical framework | |
| Pre-existing assessment framework (n = 12) | 21–31,35 |
| New assessment framework (n = 3) | 30,33,34 |

DHIs: digital health interventions; n: number of studies in each category.

Listing of Assessment Frameworks

Fifteen frameworks were identified. The most common was the Mobile App Rating Scale (6/15), followed by the Food and Drug Administration Precertification Program (4/15) (Table 6). When a framework was unnamed, it was labeled after the first author of the corresponding article.

| Frameworks and guidelines | References |
| --- | --- |
| Mobile App Rating Scale (MARS) (n = 6) | 23–28 |
| Food and Drug Administration Precertification Program (FDA Pre-Cert) (n = 4) | 23,28,30,35 |
| Enlight Suite (n = 2) | 27,28 |
| American Psychiatric Association’s App Evaluation Model (AEM) (n = 2) | 23,25 |
| Consolidated Framework for Implementation Research (CFIR) (n = 2) | 21,22 |
| Modified Enlight Suite (MES) (n = 2) | 27,28 |
| Reach, Effectiveness, Adoption, Implementation, and Maintenance (RE-AIM) (n = 1) | 22 |
| Digital Health Scorecard (DHS) (n = 1) | 26 |
| Adapted Mobile App Rating Scale (A-MARS) (n = 1) | 25 |
| Population Access Costs Experiences (PACE) (n = 1) | 29 |
| mHealth-eRecord Interoperability Framework (mHERIF) (n = 1) | 31 |
| Heuristic Evaluation for mHealth Apps (HE4EH) (n = 1) | 32 |
| Kerstin Framework (KF) (n = 1) | 33 |
| Haig Framework (HF) (n = 1) | 34 |
| Mobile Health App Trustworthiness (mHAT) (n = 1) | 35 |

Synthesized Assessment Areas and Criteria

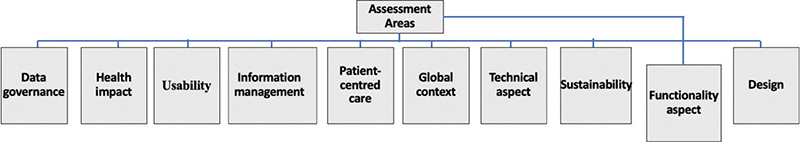

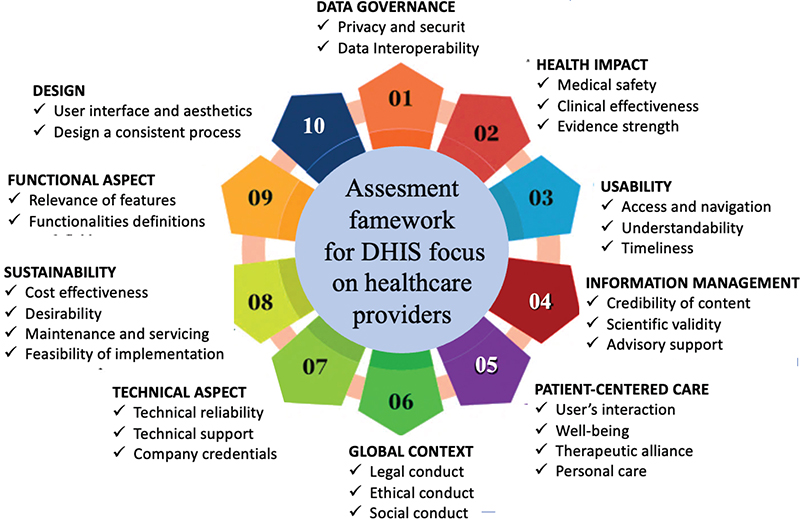

The various DHI evaluation approaches focusing on HPs, derived from the 15 frameworks, were categorized into 10 thematic areas (Figure 2): data governance, health impact, usability, information management, patient-centered care, global context, technical aspects, sustainability, functionality, and design.

Fig. 2. Overview of assessment areas.

Each of these assessment areas was divided into various subclasses containing, overall, 29 criteria. Criteria were grouped through cross-framework comparison, with sub-components assigned to the area that best reflected their main focus in most studies. Some items could fit multiple areas. “Legal and ethical conduct,” for instance, might belong to governance; however, we placed it in the global context as frameworks often related it to broader societal and cross-border issues. Similarly, “maintenance and servicing” was categorized under sustainability rather than technical aspects, as it mainly concerned long-term feasibility. Table 7 presents criteria ranked from most to least frequently cited for each thematic area, including key references and frameworks.

| Assessment areas and criteria | Frameworks in which they appear | Studies citing |
| --- | --- | --- |
| Data governance | | |
| Privacy and security (n = 14) | CFIR, AEM, MARS, A-MARS, DHS, ESF, MES, PACE, FDA Pre-Cert, mHERIF, HE4EH, KF, HF, mHAT | 21,23–35 |
| Data interoperability (n = 8) | AEM, MARS, A-MARS, DHS, mHERIF, HE4EH, HF, mHAT | 23–26,31,32,34,35 |
| Health impact | | |
| Medical safety (n = 8) | MARS, A-MARS, DHS, MES, FDA Pre-Cert, mHERIF, HE4EH, mHAT | 24–26,28,30–32,35 |
| Clinical effectiveness (n = 5) | CFIR, RE-AIM, AEM, DHS, HF | 21–23,25,34 |
| Evidence strength (n = 4) | CFIR, AEM, PACE, mHAT | 21,23,29,35 |
| Usability | | |
| Access and navigation (n = 12) | AEM, MARS, A-MARS, DHS, ESF, MES, PACE, mHERIF, HE4EH, KF, HF, mHAT | 23–29,31–35 |
| Understandability (n = 3) | A-MARS, MES, mHAT | 25,28,35 |
| Timeliness (n = 2) | A-MARS, MES | 25,28 |
| Information management | | |
| Credibility of content (n = 7) | MARS, A-MARS, ESF, MES, mHERIF, HE4EH, KF | 24,25,27,28,31–33 |
| Scientific validity (n = 7) | AEM, MARS, A-MARS, DHS, ESF, MES, HF | 23–25,27,28,34 |
| Advisory support (n = 3) | DHS, PACE, mHAT | 26,29,35 |
| Patient-centered care | | |
| Users’ interactions (n = 6) | MARS, A-MARS, ESF, MES, PACE, KF | 24,25,27–29,33 |
| Well-being (n = 5) | ESF, MES, PACE, HE4EH, mHAT | 27–29,31,35 |
| Therapeutic alliance (n = 4) | AEM, ESF, MES, PACE | 23,27–29 |
| Personalized care (n = 3) | MARS, A-MARS, HF | 24,25,34 |
| Global context | | |
| Legal conduct (n = 9) | CFIR, AEM, MES, PACE, FDA Pre-Cert, mHERIF, KF, HF, mHAT | 21,23,28–31,33–35 |
| Ethical conduct (n = 9) | CFIR, AEM, MES, PACE, FDA Pre-Cert, mHERIF, KF, HF, mHAT | 21,23,28–30,33–35 |
| Social conduct (n = 3) | CFIR, HF, mHAT | 21,34,35 |
| Technical aspect | | |
| Technical reliability (n = 3) | MARS, A-MARS, HE4EH | 24,25,32 |
| Technical support (n = 3) | mHERIF, KF, HF | 31–33 |
| Company credentials (n = 2) | AEM, FDA Pre-Cert | 23,30 |
| Sustainability | | |
| Cost-effectiveness (n = 7) | CFIR, RE-AIM, AEM, DHS, PACE, HF, mHAT | 21–24,29,34,35 |
| Desirability (n = 3) | CFIR, RE-AIM, KF | 21,32,33 |
| Maintenance and servicing (n = 3) | CFIR, RE-AIM, KF | 21,22,33 |
| Feasibility of implementation (n = 2) | CFIR, RE-AIM | 21,22 |
| Functionality aspect | | |
| Relevance of features (n = 5) | A-MARS, DHS, ESF, MES, mHAT | 25–28,35 |
| Functionalities definition (n = 3) | MARS, A-MARS, KF | 24,25,33 |
| Design | | |
| User interface and aesthetics (n = 7) | MARS, A-MARS, ESF, MES, HE4EH, HF, mHAT | 24,25,27,28,32,34,35 |
| Design consistent process (n = 4) | MARS, A-MARS, HE4EH, mHAT | 24,25,32,35 |

A-MARS: Adapted Mobile App Rating Scale; AEM: American Psychiatric Association’s App Evaluation Model; CFIR: Consolidated Framework for Implementation Research; DHS: Digital Health Scorecard; ESF: Enlight Suite Framework; FDA Pre-Cert: Food and Drug Administration Precertification Program; HE4EH: Heuristic Evaluation for mHealth Apps; HF: Haig Framework; KF: Kerstin Framework; MARS: Mobile App Rating Scale; MES: Modified Enlight Suite; mHAT: Mobile Health App Trustworthiness; mHERIF: mHealth-eRecord Interoperability Framework; PACE: Population Access Costs Experiences; RE-AIM: Reach, Effectiveness, Adoption, Implementation, and Maintenance Framework.
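The ranking and area-level counts in Table 7 can be reproduced with a simple tally. The Python sketch below is illustrative only: it copies the framework lists for two areas (technical aspect and design) from Table 7, and the function names `rank_criteria` and `area_coverage` are hypothetical, not part of the review's method.

```python
# Criterion -> frameworks in which it appears (subset copied from Table 7)
criteria_by_area = {
    "Technical aspect": {
        "Company credentials": ["AEM", "FDA Pre-Cert"],
        "Technical reliability": ["MARS", "A-MARS", "HE4EH"],
        "Technical support": ["mHERIF", "KF", "HF"],
    },
    "Design": {
        "Design consistent process": ["MARS", "A-MARS", "HE4EH", "mHAT"],
        "User interface and aesthetics": [
            "MARS", "A-MARS", "ESF", "MES", "HE4EH", "HF", "mHAT",
        ],
    },
}


def rank_criteria(criteria):
    """Return (criterion, n) pairs from most to least cited; ties keep input order."""
    counts = [(name, len(set(frameworks))) for name, frameworks in criteria.items()]
    return sorted(counts, key=lambda pair: pair[1], reverse=True)


def area_coverage(criteria):
    """Count distinct frameworks citing at least one criterion in the area."""
    return len(set().union(*criteria.values()))


for area, criteria in criteria_by_area.items():
    print(area, area_coverage(criteria), rank_criteria(criteria))
```

For these two areas, the distinct-framework counts come out at 8 and 7, matching the area-level figures reported in the text for technical aspects and design.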

Ten Key Areas

Area 1. Data Governance: Data governance was the most consistently represented area (14/15 frameworks), with a strong focus on privacy and security (14/15) and interoperability (8/15). This reflects the priority that healthcare places on safeguarding data and on interoperability between digital systems.

Area 2. Health Impact: Although health impact was reported in most frameworks (13/15), the emphasis on criteria within this domain was divergent. Medical safety (8/15) and clinical effectiveness (5/15) were reported, but often less explicitly than technological security measures, suggesting that while outcome improvement is recognized as essential, it is not always systematically evaluated. Only four frameworks addressed strength of evidence, underscoring a gap in linking DHIs to robust empirical validation.

Areas 3 and 4. Usability and Information Management: Usability (12/15) and information management (12/15) were widely reported, yet with different emphases. Usability primarily focused on access and navigation (12/15), whereas more nuanced aspects such as understandability (3/15) and timeliness (2/15) were rarely mentioned, indicating a significant shortfall in evaluating usability holistically. Similarly, while credibility and scientific validity (7/15 each) appeared frequently in information management, advisory support (3/15) was less common, showing that frameworks stress content reliability but often neglect mechanisms for independent validation.

Area 5. Patient-Centered Care: Patient-centered care was addressed in 10 of the 15 frameworks, reflecting a growing recognition of patient preferences and active participation. Within this domain, criteria included users’ interactions (6/15) to support self-management, well-being (5/15) to encourage motivation, therapeutic alliance (4/15) to strengthen users’ trust, and personalized care (3/15) to ensure tailored, nondiscriminatory services. However, the relatively low emphasis on therapeutic alliance and personalized care suggests that relational and human-centered aspects receive less attention and are not systematically assessed in most frameworks.

Area 6. Global Context: The global context domain was addressed in 9 of 15 frameworks, highlighting recognition of the broader ecosystem in which DHIs operate. Legal and ethical conduct were frequently emphasized (9/15 each), reflecting widespread concern with patient rights, data protection, and adherence to core ethical principles. In contrast, social conduct was rarely included (3/15), suggesting that considerations of social networks and stakeholder engagement remain underrepresented. Moreover, frameworks differed in how they positioned these criteria, some treating them as part of governance, others framing them within societal or cross-border contexts, indicating a lack of consensus on where responsibility for ethical and legal oversight resides in digital health evaluation.

Areas 7 and 8. Technical Aspect and Sustainability: Technical aspects appeared in 8 of the 15 frameworks, most commonly focusing on technical reliability, defined as stability and low risks of breakdowns, and technical support, such as immediate assistance (3/15 each). Company credentials, defined as the developer’s identity and competencies, were addressed in only 2 of the 15 frameworks. These findings suggest that HPs tend to prioritize operational stability and reliability of DHIs, which are key for trust and long-term sustainability. The limited attention to company credentials may reflect an assumption that reliability and compliance standards implicitly ensure developer credibility.

Sustainability, which ensures the long-term functionality and viability of DHIs, was also reported in 8 of 15 frameworks. Cost-effectiveness (7/15) was most frequently considered, while desirability and maintenance (3/15 each) and feasibility of implementation (2/15) were less often addressed, suggesting that frameworks focus more on immediate economic evaluation than on practical implementation, ongoing performance, or user appeal. Policymakers are highly sensitive to costs, and demonstrating cost-effectiveness is often a prerequisite for DHI adoption and reimbursement, so frameworks naturally emphasize it.

Area 9. Functionality Aspect: The functional aspect was addressed in 7 of 15 frameworks, focusing on how DHIs achieve their intended goals. Relevance of features (5/15) assessed usefulness and performance in meeting patient needs, while functionality definition (3/15) outlined purpose, objectives, and expected outcomes. The relatively low emphasis on defining functionalities suggests that frameworks may prioritize feature performance over clarity of purpose and expected impact.

Area 10. Design: Design was addressed in 7 of 15 frameworks, emphasizing user interface and aesthetics (7/15), which covers layout, color, and overall visual appeal, and design consistency (4/15), which ensures a unified and intuitive experience across interfaces. Evaluating design often requires resource-intensive methods, which may explain why it is not systematically assessed, despite its potential impact on user engagement and health outcomes.

Although these categorizations were broadly consistent, the emphasis on certain criteria over others varied across studies, reflecting both convergence and fragmentation in how DHIs for HPs are evaluated.

Quality Assessment of Selected Studies

The quality assessment of the selected studies, conducted using the CASP19 and the JBI Checklist,20 revealed that three studies had unclear recruitment strategies, three did not specify ethical considerations, two lacked sufficient details on data collection, and one did not provide clarity on data analysis. To maximize data collection, no studies were excluded on the basis of quality.

Discussion

Principal Findings

Our study identified a set of criteria to assess DHIs focusing on HPs, highlighting the importance of many factors and dimensions alongside clinical outcomes.

With regard to data governance, privacy and security emerge as pivotal dimensions to assess DHIs, playing a central role in fostering user trust in DHIs. This aligns with previous findings highlighting privacy concerns as a major barrier to adoption.1,10,36 While data interoperability is crucial for integrating DHIs into traditional health systems and for reducing duplication, effort, and omissions in data entry by HPs, its limited and inconsistent consideration in evaluation frameworks underscores broader challenges in the digital transformation of healthcare. The lack of interoperability may contribute to fragmented health information systems, hindering the delivery of coordinated and equitable care.10,37

Similarly, this review highlights that health impact, including medical safety, clinical effectiveness, and the strength of evidence, remains a critical set of factors in the evaluation of DHIs. While DHIs hold considerable clinical potential in the management of chronic conditions, particular attention must be given to safety, effectiveness, and the strength of supporting evidence.38 However, the rapid pace of innovation often outstrips rigorous validation, raising the risk of adopting ineffective or even harmful tools within health systems. Striking a balance between innovation, patient safety, and clinical effectiveness is therefore essential to ensure the sustainable integration of DHIs into healthcare delivery.

In parallel, our findings on usability, encompassing access, navigation, understandability, and timeliness, align with previous reviews indicating that DHIs are more likely to be adopted when they are easy to use, present content that is easy to understand, function under low internet bandwidth, and provide a rapid response.28,39 In healthcare, these characteristics are essential for effective clinical decision-making and efficient workflow, particularly in emergency care settings.8,28,35

Furthermore, our findings highlight the need to evaluate the field of information management, particularly addressing credibility of content, scientific validity, and advisory support. This aligns with previous reviews highlighting that the availability of reliable information is essential to maintain user trust.35 Some DHIs lack clinical standards and may provide misleading content, emphasizing the need to validate information through trusted experts and involve independent medical committees to ensure high-quality, evidence-based solutions.25

Moreover, patient-centered care, including user interaction, well-being, and personalized care, was highlighted in the reviewed studies. This aligns with previous literature emphasizing the critical role of user engagement, sustained behavior change, and active patient involvement in the use of DHIs.11 The therapeutic alliance, observed in our study, further underscores the importance of integrating DHIs into the care pathway to prevent care fragmentation, maintain continuity of health services, and facilitate shared decision-making.23

In addition, the global context, including legal, ethical, and social conduct, was identified as a key domain for assessing DHIs targeting HPs. A lack of regulations governing patient data in DHIs may hinder adoption, whereas supportive policies facilitate it, as shown in previous work.23 Another study has shown that support from healthcare organizations and stakeholders further promotes DHI uptake.36 Additionally, DHI design should take into account users’ cultural, social, and linguistic contexts to help bridge the digital divide and enhance digital literacy.11,21

Technical aspects were addressed in several studies, mainly focusing on reliability and technical support. Weak technical infrastructure is reported to delay care delivery, frustrate users, reduce adoption, and limit recruitment.36 It is also important to assess developer credentials as key criteria for ensuring trustworthy DHIs.10,30

Regarding the domain of sustainability and its associated criteria, our results are consistent with previous literature. Prior work indicates that sustainability depends on secure funding, resource availability, and continuous training and that DHIs that are regularly updated are more likely to be adopted and sustained over time.10,36,39

Concerning functionality, our findings highlighted the importance of assessing and defining relevant features and functionalities. Understanding the needs and available resources of DHI users is essential for defining appropriate functions, which may operate synchronously or asynchronously. Prior research has demonstrated that DHIs are complex interventions with multiple components that fulfill a range of functionalities, which must be relevant.39 In this context, features such as voice assistants, alerts, and notifications can further enhance user communication, engagement, and adherence.40 It is worth noting that functionality is frequently associated with technical aspects.40

Finally, our review emphasized the significance of evaluating the design dimension of DHIs for health professionals, particularly user interface aesthetics and design consistency. Similar studies confirm that engaging and consistently unified design, along with gamification, can increase sustained use of DHIs.25,40 Allowing users to personalize features such as text size or colors further enhances accessibility and inclusivity.7 In contrast, poor design choices, including bright flashing colors, may deter DHI users or even trigger health risks in vulnerable groups.7 Therefore, integrating user-centered design principles is essential to improve user satisfaction and promote long-term adherence.40

Towards a Framework for DHI Assessment

Despite numerous frameworks, there is no standardized or transparent approach and no consensus on essential criteria for assessing DHIs.11,25 Selecting a DHI remains complex due to limited practical guidance.29 To the best of our knowledge, no existing framework fully addresses all relevant domains for evaluating DHIs. Current approaches remain limited: some focus primarily on data governance and usability, while others emphasize clinical outcomes, technical aspects, or sociocultural factors. Based on the findings of this review, we derived a unified framework (Figure 3) that integrates interdependent criteria and areas for assessing DHIs focusing on HPs.

Fig. 3. Assessment framework for DHIs focusing on HPs. DHIs: digital health interventions; HPs: healthcare providers; DHS: Digital Health Scorecard.

It includes 29 criteria grouped into 10 key areas, all of which should be systematically assessed, namely: data governance, usability, information management, health impact, patient-centered care, technical aspects, global context, functionality, sustainability, and design. This multidimensional framework offers a holistic lens to assess DHIs focusing on HPs, providing a structured basis to guide their design, implementation, and adoption, thereby facilitating their safer, more effective, and sustainable integration into clinical practice.

The proposed framework is designed to be adaptable across a wide range of healthcare settings and contexts. In low-resource environments, its application can prioritize feasibility, infrastructure availability, and cost-effectiveness, with greater weighting given to interoperability, ease of use, and minimal maintenance requirements. In different regulatory contexts, the framework can be adjusted to align with local policies, data protection laws, and ethical standards, emphasizing governance, privacy, and compliance criteria.

Beyond these, several other dimensions may influence its adaptation. In urban versus rural settings, differences in connectivity and digital literacy may shift the focus towards usability and offline accessibility. Across public and private health systems, weighting may reflect different governance priorities, with clinical effectiveness and user experience emphasized in private settings and usability and population coverage prioritized in public systems.
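The context-dependent weighting described above can be illustrated with a minimal sketch. It assumes each of the 10 areas has already been scored on a 0 to 1 scale and combines them as a weighted mean. The function name, the scoring scale, and the weight values are hypothetical illustrations and are not part of the framework; in particular, the mapping of a low-resource setting onto heavier weights for usability, technical aspects, and sustainability is an assumption made only for this example.

```python
from typing import Dict

# The ten assessment areas named in the framework.
AREAS = [
    "data governance", "usability", "information management", "health impact",
    "patient-centered care", "technical aspects", "global context",
    "functionality", "sustainability", "design",
]


def weighted_score(area_scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Return the weighted mean of per-area scores (each assumed to lie in [0, 1])."""
    missing = [area for area in AREAS if area not in area_scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    # Areas without an explicit weight default to 1.0 (equal weighting).
    total_weight = sum(weights.get(area, 1.0) for area in AREAS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    weighted_sum = sum(area_scores[area] * weights.get(area, 1.0) for area in AREAS)
    return weighted_sum / total_weight


# Hypothetical weighting profile for a low-resource setting (illustrative values only).
LOW_RESOURCE_WEIGHTS = {"usability": 2.0, "technical aspects": 2.0, "sustainability": 2.0}

if __name__ == "__main__":
    # Hypothetical per-area scores for a single DHI.
    scores = {area: 0.6 for area in AREAS}
    scores["usability"] = 0.9
    print(round(weighted_score(scores, {}), 3))
    print(round(weighted_score(scores, LOW_RESOURCE_WEIGHTS), 3))
```

Under this sketch, the same DHI receives a different overall score depending on the weighting profile, which is the intended effect of adapting the framework to context. Actual weights would need to come from the stakeholder consensus process rather than from values chosen here.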

Similarly, the framework can be tailored to different levels of the health system, from primary care to specialized hospitals or community-based programs, by emphasizing contextually relevant areas such as health impact, information management, sustainability, or patient-centered care. The framework can be applied across diverse contexts, including multicultural and multilingual settings, where cultural, social, and linguistic adaptability and personalization are crucial to ensure the acceptability of DHIs. It also accommodates varying levels of digital maturity, emphasizing technical robustness, advisory support, and interoperability in advanced settings while prioritizing training and capacity building in emerging environments.

Moreover, it can remain relevant in emergency or humanitarian contexts, where minimal infrastructure requirements and ease of implementation and maintenance are critical. Its application may extend across diverse health domains, including maternal health, mental health, chronic disease management, and adolescent health, each requiring careful consideration of content credibility, medical safety, and functional performance.

Finally, it is important to acknowledge that this framework was derived from a synthesis of existing literature and has not yet undergone empirical validation. To address this, we plan to conduct a Delphi study involving experts in digital health, healthcare delivery, and health policy. This study will assess the clarity, relevance, and applicability of each area and criterion through multiple rounds aimed at reaching consensus on the most essential assessment elements. The results will inform a pilot phase to test the framework in real healthcare settings. Furthermore, the weighting of criteria will be refined as part of this participatory process to reflect stakeholder priorities and contextual specificities. This iterative approach will ensure that the framework remains scientifically robust, context sensitive, and adaptable across healthcare environments, supporting sustainable integration of DHIs into practice.

Strengths and Limitations

This study has several strengths, notably its foundation in a comprehensive scoping review to examine the assessment of DHIs focusing on HPs. The assessment criteria and areas within our framework were informed by recent and existing models developed in different countries for various healthcare fields, which strengthens its theoretical foundation. Given that this framework provides a holistic, comprehensive, and user-friendly structure integrating multiple evaluation dimensions, it emerges as a living tool, designed to evolve alongside emerging technologies.

The study has several limitations. Its 5-year scope might have excluded some recent data. The categorization of assessment criteria and the selection of thematic areas might have introduced subjectivity, resulting in variable outcomes across reviewers. Focusing primarily on HPs may have limited the frameworks and criteria identified. Moreover, only English-language articles were included, and gray literature was excluded, which may have led to the omission of some studies. Finally, the framework lacked empirical validation, and key stakeholders, technology developers, and patients were not directly involved in our study.

Conclusions

Our study mapped and synthesized the existing literature on the assessment of DHIs to support HPs’ decision-making. We derived a framework of 29 interdependent assessment criteria across 10 key areas: usability, design, technical aspects, functionality, information management, health impact, patient-centered care, sustainability, global context, and data governance, providing a comprehensive and structured basis for assessment. This framework offers a robust tool to guide the assessment and integration of DHIs in diverse healthcare settings. Our future work, together with other forthcoming studies, will empirically validate this framework in real-world settings and assess its applicability and impact, as well as the safe integration of DHIs focusing on HPs, through active collaboration with academics, policymakers, patients, and technology developers.

Contributors

Drs. El Kouarty and El Hilali conceptualized the study and collected and analyzed the data. Dr. Sahnoun contributed to study design and manuscript review. Dr. Obtel performed data analysis and reviewed the manuscript. All authors edited and approved the final submitted version.

Data Availability Statement (DAS), Data Sharing, Reproducibility, and Data Repositories

Data or references and links to secondary data sets are contained within the published work. The authors are pleased to discuss the data, including information on the PRISMA-ScR checklist, the screening process, phases of thematic analysis after Braun and Clarke, the CASP and JBI checklists, a summary of frameworks, assessment criteria and the frameworks in which they appeared, the framework × criteria matrix, and the quality assessment of selected studies.

Application of AI-Generated Text or Related Technology

No generative AI technologies were used during the writing or editing of the manuscript.

References

1. The fifty-eighth World Health Assembly [Internet]. WHA 58/28 Document. Geneva: World Health Organization; 2005 [cited 2024 Feb 15]. Available from: https://apps.who.int/gb/ebwha/pdf_files/WHA58REC1/english/A58_2005_REC1-en.pdf

2. Classification of digital health interventions [Internet]. WHO/RHR/18.06. Geneva: World Health Organization; 2018.

3. Fifty-eighth World Health Assembly [Internet]. eHealth Report A58/21. Geneva: World Health Organization; 2005 [cited 2024 Feb 15]. Available from: https://apps.who.int/gb/archive/pdf_files/WHA58/A58_21-en.pdf

4. Magnol M, Eleonore B, Claire R, et al. Use of eHealth by patients with rheumatoid arthritis: observational, cross-sectional, multicenter study. J Med Internet Res. 2021;23(1):e19998. https://doi.org/10.2196/19998

5. Knitza J, Simon D, Lambrecht A, et al. Mobile health usage, preferences, barriers, and eHealth literacy in rheumatology: patient survey study. JMIR Mhealth Uhealth. 2020;8(8):e19661. https://doi.org/10.2196/19661

6. Perrin Franck C, Babington-Ashaye A, Dietrich D, et al. iCHECK-DH: guidelines and checklist for the reporting on digital health implementations. J Med Internet Res. 2023;25:e46694. https://doi.org/10.2196/46694

7. Jacob C, Lindeque J, Müller R, et al. A sociotechnical framework to assess patient-facing eHealth tools: results of a modified Delphi process. NPJ Digit Med. 2023;6(1):232. https://doi.org/10.1038/s41746-023-00982-w

8. The ongoing journey to commitment and transformation: digital health in the WHO European Region, 2023. Copenhagen: WHO Regional Office for Europe; 2023.

9. Hongsanun W, Insuk S. Quality assessment criteria for mobile health apps: a systematic review. Walailak J Sci Technol. 2020;17(8):745–59. https://doi.org/10.48048/wjst.2020.6482

10. Jacob C, Lindeque J, Klein A, et al. Assessing the quality and impact of eHealth tools: systematic literature review and narrative synthesis. JMIR Hum Factors. 2023;10:e45143. https://doi.org/10.2196/45143

11. Ribaut J, DeVito Dabbs A, Dobbels F, et al. Developing a comprehensive list of criteria to evaluate the characteristics and quality of eHealth smartphone apps: systematic review. JMIR Mhealth Uhealth. 2024;12:e48625. https://doi.org/10.2196/48625

12. Onukwugha FI, Smith L, Kaseje D, et al. The effectiveness and characteristics of mHealth interventions to increase adolescents’ use of sexual and reproductive health services in sub-Saharan Africa: a systematic review. PLoS One. 2022;17(1):e0261973. https://doi.org/10.1371/journal.pone.0261973

13. Fadahunsi KP, O’Connor S, Akinlua JT, et al. Information quality frameworks for digital health technologies: systematic review. J Med Internet Res. 2021;23(5):e23479. https://doi.org/10.2196/23479

14. Munn Z, Pollock D, Khalil H, Alexander L, McInerney P, Godfrey CM, et al. What are scoping reviews? Providing a formal definition of scoping reviews as a type of evidence synthesis. JBI Evid Synth. 2022;20(4):950–2. https://doi.org/10.11124/JBIES-21-00483

15. Arksey H, O’Malley L. Scoping studies: towards a methodological framework. Int J Soc Res Methodol. 2005;8(1):19–32. https://doi.org/10.1080/1364557032000119616

16. Moher D, Liberati A, Tetzlaff J, et al. Preferred reporting items for systematic reviews and meta-analyses: the PRISMA statement. PLoS Med. 2009;6(7):e1000097. https://doi.org/10.1371/journal.pmed.1000097

17. Shetty J, Shetty A, Mundkur SC, et al. Economic burden on caregivers or parents with Down syndrome children: a systematic review protocol. Syst Rev. 2023;12:3. https://doi.org/10.1186/s13643-022-02165-2

18. Braun V, Clarke V. Using thematic analysis in psychology. Qual Res Psychol. 2006;3(2):77–101. https://doi.org/10.1191/1478088706qp063oa

19. Critical Appraisal Skills Program. CASP qualitative checklist [Internet]. 2018 [cited 2024 Mar 15]. Available from: https://casp-uk.net/casp-tools-checklists/

20. JBI. JBI’s critical checklist for quasi-experimental studies [Internet]. [cited 2024 Mar 20]. Available from: https://jbi.global/critical-appraisal-tools

21. El Joueidi S, Bardosh K, Musoke R, et al. Evaluation of the implementation process of the mobile health platform “WelTel” in six sites in East Africa and Canada using the modified consolidated framework for implementation research (mCFIR). BMC Med Inform Decis Mak. 2021;21:293. https://doi.org/10.1186/s12911-021-01644-1

22. Arrossi S, Paolino M, Orellana L, et al. Mixed-methods approach to evaluate an mHealth intervention to increase adherence to triage of human papillomavirus-positive women who have performed self-collection (the ATICA study): study protocol for a hybrid type I cluster randomized effectiveness-implementation trial. Trials. 2019;20:148. https://doi.org/10.1186/s13063-019-3229-3

23. Lagan S, Aquino P, Emerson MR, et al. Actionable health app evaluation: translating expert frameworks into objective metrics. NPJ Digit Med. 2020;3:100. https://doi.org/10.1038/s41746-020-00312-4

24. Jones C, O’Toole K, Jones K, et al. Quality of psychoeducational apps for military members with mild traumatic brain injury: an evaluation utilizing the Mobile Application Rating Scale. JMIR Mhealth Uhealth. 2020;8(8):e19807. https://doi.org/10.2196/19807

25. Roberts AE, Davenport TA, Wong T, et al. Evaluating the quality and safety of health-related apps and e-tools: adapting the Mobile App Rating Scale and developing a quality assurance protocol. Internet Interv. 2021;24:100379. https://doi.org/10.1016/j.invent.2021.100379

26. Sedhom R, McShea MJ, Cohen AB, et al. Mobile app validation: a digital health scorecard approach. NPJ Digit Med. 2021;4:111. https://doi.org/10.1038/s41746-021-00476-7

27. Tan YY, Woulfe F, Chirambo GB, et al. Framework to assess the quality of mHealth apps: a mixed-method international case study protocol. BMJ Open. 2022;12:e062909. https://doi.org/10.1136/bmjopen-2022-062909

28. Woulfe F, Fadahunsi KP, O’Grady M, et al. Modification and validation of an mHealth app quality assessment methodology for international use: cross-sectional and eDelphi studies. JMIR Form Res. 2022;6(8):e36912. https://doi.org/10.2196/36912

29. Little LM, Pickett KA, Proffitt R, et al. Keeping pace with 21st century healthcare: a framework for telehealth research, practice, and program evaluation in occupational therapy. Int J Telerehabil. 2021;13(1):e6379. https://doi.org/10.5195/ijt.2021.6379

30. Alon N, Stern AD, Torous J. Assessing the Food and Drug Administration’s risk-based framework for software precertification with top health apps in the United States: quality improvement study. JMIR Mhealth Uhealth. 2020;8(10):e20482. https://doi.org/10.2196/20482

31. Ndlovu K, Mars M, Scott RE. Validation of an interoperability framework for linking mHealth apps to electronic record systems in Botswana: expert survey study. JMIR Form Res. 2023;7:e41225. https://doi.org/10.2196/41225

32. Khowaja K, Al-Thani D. New checklist for the heuristic evaluation of mHealth apps (HE4EH): development and usability study. JMIR Mhealth Uhealth. 2020;8(10):e20353. https://doi.org/10.2196/20353

33. Vokinger KN, Nittas V, Witt CM, et al. Digital health and the COVID-19 epidemic: an assessment framework for apps from an epidemiological and legal perspective. Swiss Med Wkly. 2020;150:w20282. https://doi.org/10.4414/smw.2020.20282

34. Haig M, Main C, Chávez D, et al. A value framework to assess patient-facing digital health technologies that aim to improve chronic disease management: a Delphi approach. Value Health. 2023;26(10):1474–84. https://doi.org/10.1016/j.jval.2023.06.008

35. van Haasteren A, Vayena E, Powell J. The mobile health app trustworthiness checklist: usability assessment. JMIR Mhealth Uhealth. 2020;8(7):e16844. https://doi.org/10.2196/16844

36. Jahnel T, Pan C-C, Pedros Barnils N, Muellmann S, Freye M, Dassow H-H, et al. Developing and evaluating digital public health interventions using the Digital Public Health Framework DigiPHrame: a framework development study. J Med Internet Res. 2024;26:e54269. https://doi.org/10.2196/54269

37. Davis MM, Freeman M, Kaye J, et al. A systematic review of clinician and staff views on the acceptability of incorporating remote monitoring technology into primary care. Telemed J E Health. 2014;20(5):428–38. https://doi.org/10.1089/tmj.2013.0166

38. Moharra M, Almazán C, Decool M, et al. Implementation of a cross-border health service: physician and pharmacists’ opinions from the epSOS project. Fam Pract. 2015;32(5):564–7. https://doi.org/10.1093/fampra/cmv052

39. Rouleau G, Wu K, Ramamoorthi K, Boxall C, Liu RH, Maloney S, et al. Mapping theories, models, and frameworks to evaluate digital health interventions: scoping review. J Med Internet Res. 2024;26:e51098. https://doi.org/10.2196/51098

40. Weirauch V, Soehnchen C, Burmann A, Meister S. Methods, indicators, and end-user involvement in the evaluation of digital health interventions for the public: scoping review. J Med Internet Res. 2024;26:e55714. https://doi.org/10.2196/55714

Copyright Ownership: This is an open-access article distributed in accordance with the Creative Commons Attribution Non-Commercial (CC BY-NC 4.0) license, which permits others to distribute, adapt, enhance this work non-commercially, and license their derivative works on different terms, provided the original work is properly cited and the use is non-commercial. See http://creativecommons.org/licenses/by-nc/4.0. The authors of this article own the copyright.